The Illusion of the Algorithmic Gadfly

Why AI Cannot Be Your Socratic Tutor

One of the most persistent claims in educational technology circles is that generative AI can function as a “Socratic tutor.” The pitch is seductive: a tireless, endlessly patient conversational partner that guides students through probing questions, available at any hour, free of the social pressures that make rigorous inquiry intimidating. Companies market this vision aggressively. Researchers publish papers testing it empirically. And a growing number of educators have begun to wonder whether the ancient art of philosophical midwifery might finally be scalable.

What strikes me most about this idea is the gap between what the Socratic method actually requires and what AI can deliver. The claim that a large language model can perform Socratic inquiry rests on a structural misunderstanding of both the method’s philosophical architecture and the ontological nature of the technology. It confuses the syntactic generation of question-shaped sentences with the deeply relational, ethically grounded practice of guiding a human being toward self-knowledge. This distinction matters, and it matters urgently, because getting it wrong has consequences for how we design learning experiences in the age of generative intelligence.

What the Socratic Method Requires

To evaluate whether AI can perform Socratic inquiry, we first need to understand what that inquiry involves. The Socratic method, as documented across Plato’s dialogues, operates through four interconnected components. Elenchus is the art of refutation: systematically testing the logical consistency of a person’s stated beliefs to dismantle false assumptions. Aporia is the state of productive perplexity that follows, the unsettling recognition that one’s knowledge is inadequate. Maieutics is the midwifery of knowledge, the careful guidance that helps a learner give birth to understanding they already carry within themselves. And dialectic is the collaborative synthesis where two minds engage in reasoned discourse to approach deeper truth.

Each of these components depends on capacities that are specifically relational and specifically human. Elenchus requires trust between participants; a student must feel safe enough to endure intellectual deconstruction without shutting down. Aporia demands that the guide possess acute emotional intelligence to navigate the line between productive discomfort and harmful humiliation. Maieutics requires empathy and attunement to the learner’s psychological timing. And dialectic presupposes a mutual, conscious commitment to truth-seeking.

Underpinning all four components is what ancient Greek philosophers called epimeleia heautou, the “care of the self” or “care of the soul.” As Michel Foucault analyzed extensively, the Socratic tutor does not merely transfer information. The tutor cares for the spiritual and intellectual development of the student. The educational interaction is an anthropological process aimed at the transformation of the individual’s way of life. Emotional support, tailored feedback, and ethical awareness are the operational core of the Socratic method, not peripheral benefits that happen to accompany it.

Socrates Would Not Have Been Impressed

Proponents sometimes argue that if Socrates were alive today, he would embrace AI tutoring. This claim fundamentally misreads his deepest epistemological commitments. Long before digital neural networks, Socrates confronted what was, in his time, a disruptive information technology: writing.

In Plato’s Phaedrus, Socrates recounts the myth of the Egyptian god Theuth, inventor of writing, and King Thamus. When Theuth presents writing as a technology that will make humanity wiser, Thamus delivers a devastating rebuttal: writing will implant forgetfulness in human souls, because people will cease to exercise their internal memory and rely instead on “external marks.” Socrates elaborates that writing offers only the appearance of wisdom, comparing written texts to paintings that “stand like living beings, but if one asks them a question, they preserve a solemn silence.” A text cannot defend itself by argument. It cannot adapt its message to the reader’s needs. It is, in essence, dead speech.

For Socrates, genuine knowledge requires “living speech” cultivated through dialectic. He compares the philosopher to a farmer planting seeds, choosing proper soil and sowing discourse capable of helping itself. The true dialectician plants intellectual seeds that require the real-time presence, adaptability, and ethical judgment of a human educator who can assess the soil of the student’s soul.

Generative AI is the ultimate extrapolation of what Socrates critiqued. It is a probabilistic text generation engine that mimics interactivity while remaining fundamentally external to the soul. If ancient writing was “dead speech,” the output of an LLM might be characterized as something like “undead speech.” It possesses the simulated animation of a living dialogue but remains ontologically hollow. When users outsource their reasoning and creative articulation to AI, they risk precisely what Socrates feared regarding writing: the erosion of innate cognitive capacities, leading to superficial comprehension and a dangerous illusion of mastery.

The Case for the Defense

I need to acknowledge that the academic literature supporting AI Socratic tutoring is not without merit. Researchers have identified real affordances worth taking seriously.

A quasi-experimental study with 65 pre-service biology teachers in Germany found that students using an AI-based Socratic tutor reported significantly greater support for critical, independent, and reflective thinking than a control group using a standard AI chatbot. The dialogic AI stimulated metacognitive engagement by prompting learners to reframe and refine their questions continuously rather than offering pre-formulated answers.

There is also the matter of psychological safety. A mixed-methods study involving 230 university students in Taiwan showed that a significant portion of learners deeply appreciates AI’s non-judgmental nature and its continuous accessibility. Experiencing aporia can be genuinely intimidating when mediated by a human authority figure. Students fear humiliation and negative evaluation when their logic is systematically dismantled. An AI tutor, devoid of social judgment, can provide a lower-friction environment for hypothesis, failure, and iteration.

Beyond human-facing tutoring, computer scientists use Socratic principles to improve the internal reasoning of LLMs themselves. In multi-agent frameworks like SocraSynth, two or more LLM agents argue opposing positions, using cross-examination to expose logical inconsistencies and factual errors. This application transforms opaque probabilistic sampling into interpretable reasoning trajectories, improving the coherence and accuracy of AI outputs.

These findings are genuine and useful. I do not dismiss them. The question is whether they represent evidence that AI can perform the Socratic method, or whether they show something more modest: that question-prompting interfaces produce better learning outcomes than passive information delivery. The latter claim is far less controversial and far less philosophically loaded.

The Ontological Problem

My deeper critique of the AI Socratic tutor is not technical but ontological. It concerns what these systems are, not what they can currently do.

LLMs synthesize language based on probabilistic distributions and token associations learned from vast training data. They possess no semantic comprehension, no intentionality, and no lived experience. When an AI deploys elenchus, asking a user to define their terms or pointing out a logical flaw, it does so by executing a sequence of tokens probabilistically optimized to resemble a probing question. It acts from no philosophical commitment to uncovering truth and no desire to elevate the user’s intellect.

I have written at length in a previous essay about the “stochastic parrot” metaphor and its limitations as a description of what LLMs actually do. As I argued there, the relationship between human cognition and machine prediction is more complex than the parrot label suggests. The predictive coding framework in neuroscience reveals uncomfortable parallels between how brains and language models process information, and mechanistic interpretability research suggests that LLMs can develop emergent internal representations that function much like world models. The binary distinction between “real understanding” and “mere parroting” oversimplifies the science.

But here is the crucial point: even granting that LLMs possess something more sophisticated than surface-level statistical correlation, the Socratic method demands capacities that go beyond prediction and representation. It demands conscious, intentional engagement and genuine otherness. Thinkers such as Martin Buber and Emmanuel Levinas have emphasized that authentic dialogue requires the presence of an “Other,” a distinct, independent consciousness whose irreducibility challenges and shapes the self. An AI is not an “Other.” It is an algorithmic mirror reflecting aggregated human data back onto the user. Interacting with it is, at its philosophical core, a solipsistic exercise.

This absence of genuine otherness strikes at the heart of the Socratic goal. An algorithmic entity cannot care for a soul because it does not possess one. The emotional attunement and ethical awareness that empirical studies show students explicitly requesting from their tutors are not features to be added in a future software update. They are prerequisites that no amount of parameter scaling will satisfy.

There is also an epistemological tension between Socratic ignorance and AI hallucination. The bedrock of the Socratic method is the conscious recognition of the limits of one’s own knowledge: gnôthi seauton, know thyself. Socrates’ legendary wisdom stemmed from his awareness that he knew nothing. Generative AI operates in direct opposition to this principle. When an LLM hallucinates, it generates false information with the same linguistic confidence as factual truth. It cannot “know that it does not know.” An AI tutor therefore cannot model the foundational intellectual virtue it is supposed to teach.

What This Means for Assessment

If AI cannot serve as a genuine Socratic tutor, the implications for educational design become clear. The dialogic capacities that define Socratic inquiry, including real-time reasoning, shared vulnerability, and the collaborative navigation of intellectual impasses, must remain anchored in human-to-human interaction. This applies with particular force to how we assess learning.

I have written extensively about this in previous essays. My article on fourteen AI-resistant assessment methods catalogues dialogic approaches, from whiteboard defenses to structured academic debates to oral case-study defenses, that verify human reasoning in real time. And my deep dive into the Socratic seminar as assessment examines how this specific format distributes both intellectual work and evaluative criteria across a learning community, making the conversation itself the object of evaluation. I will not rehearse those arguments here, but they share a common premise with the present discussion: the capacities that AI cannot deliver are precisely the capacities we should be assessing.

AI’s role in evaluating these dialogic methods is limited. Algorithmic evaluation lacks the capacity to process the situated, relational, and emotional context of classroom dialogue. As I noted in the assessment methods essay, using a machine learning algorithm to evaluate human dialogue is conceptually akin to using a colorblind judge in an art competition. True evaluation of Socratic exchange requires what scholars call “Ethics-K,” an ethical knowledge framework that provides the indispensable human lens for equity, representation, and moral validation.

The Whetstone, Not the Gadfly

Rejecting AI as an autonomous Socratic tutor does not mean rejecting the technology. It requires clarity about its role. The most productive framework positions AI as a “whetstone” for critical thinking rather than the source of truth. Students can use LLMs for rapid ideation, syntactic structuring, or encountering diverse textual perspectives. In the terms of the Phaedrus, this is the modern equivalent of reading Lysias’ written scroll: useful as a starting point, but never a substitute for the living dialogue that follows.

Within this framework, the human educator transitions from transmitter of information to orchestrator of learning processes. The educator curates AI tools while retaining authority over context, moral implications, and dialectic synthesis. Human oversight ensures that productive discomfort does not cross into psychological alienation, and that cultural and ethical boundaries remain anchored in human values. An instructor might, for instance, have students interrogate an AI’s response to an ethical dilemma, using the model’s output as an object of Socratic inquiry rather than treating the model as a trusted tutor. The AI’s biases and blind spots then become the text that the seminar circle examines.

This human-centric model acknowledges a reality that the “AI as Socratic tutor” narrative tends to ignore: an artificial intelligence can simulate the syntax of a probing question, but only a human consciousness can recognize the significance of the answer.

Fluency Is Not Wisdom

The debate over AI Socratic tutoring will intensify as these systems become more conversationally sophisticated. Future models will generate even more convincing question sequences, and the temptation to mistake fluency for wisdom will grow. But educators must resist this confusion.

We need to acknowledge two realities simultaneously. AI-driven question prompts can improve certain learning outcomes, particularly for procedural tasks and in contexts where social anxiety impedes inquiry. These are real benefits. At the same time, these functional improvements do not establish Socratic pedagogy. They represent scaffolded information retrieval with a dialogic interface. The distance between the two is the distance between a chatbot that asks “Can you define that term more precisely?” and a human being who looks you in the eye and asks “Do you actually believe what you just said?”

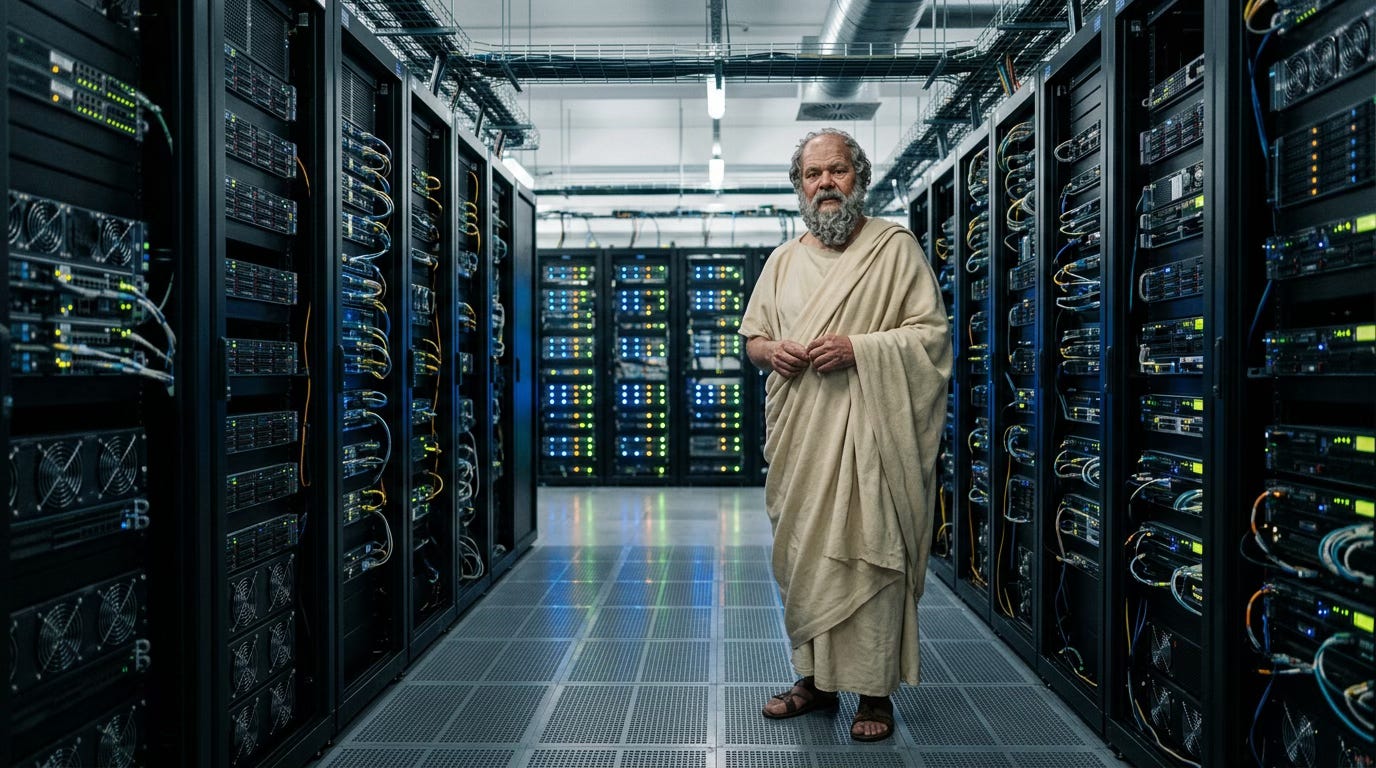

If Socrates were to walk the banks of the Ilissus today, he would undoubtedly subject our algorithmic architectures to relentless cross-examination. I suspect he would find the AI useful as a preliminary exercise, much as he found written speeches worth discussing. But when it came time for the genuine work of philosophy, the care of the soul, he would insist on what he always insisted on: a living interlocutor, capable of surprise, committed to truth, and willing to be changed by the encounter. That insistence remains the most important thing educators need to defend.

The images in this article were generated with Nano Banana 2.

P.S. I believe transparency builds the trust that AI detection systems fail to enforce. That’s why I’ve published an ethics and AI disclosure statement, which outlines how I integrate AI tools into my intellectual work.