Reframing the Stochastic Parrot

On the Limits of a Seductive Metaphor

Every few days, with the predictability of a recurring appointment, another post surfaces in my LinkedIn feed. The message is always the same: large language models are nothing more than “stochastic parrots.” They are systems that merely recombine linguistic patterns without understanding, devoid of meaning or intent. The argument has persisted for years now, cycling through the discourse with remarkable consistency. These posts arrive with the certainty of scripture, often accompanied by knowing nods about how “we need to remember what these systems really are.” The conviction is admirable. The analysis, unfortunately, is not.

I find myself uncomfortable with this narrative, not because I believe LLMs possess some mystical form of consciousness, but because the confident dismissal rests on assumptions about human cognition that contemporary neuroscience has spent the last two decades quietly dismantling. The stochastic parrot metaphor, introduced by Emily Bender and colleagues in their influential 2021 paper, offers a seductive clarity: humans understand language because we ground it in physical reality and communicative intent; machines merely manipulate statistical patterns. One has meaning; the other only form.

The problem is that this distinction requires a model of human cognition that may not exist.

The Appeal of the Stochastic Parrot

To understand why the stochastic parrot metaphor persists with such force, we need to appreciate its theoretical elegance. The argument emerges from a thought experiment known as the Octopus Test, proposed by Bender and Alexander Koller in 2020. Two humans stranded on separate islands communicate via underwater telegraph. A hyper-intelligent octopus taps the cable, observes their exchanges for months, learns the statistical patterns of their language, then cuts the cable and impersonates one of them.

The octopus can reproduce conversational patterns perfectly. When asked, “How are you?” it responds, “I’m fine, the weather is great” because this correlation appears thousands of times in the data. But when one human sends an urgent message (“I’m being attacked by a bear, what should I do with this coconut?”), the octopus fails. It knows “run” frequently appears near “bear” in text, but it cannot reason about the physical affordances of coconuts as defensive implements. It manipulates symbols without accessing the world those symbols describe.

This thought experiment captures something many of us intuitively believe about the difference between human and machine intelligence. We assume our understanding derives from embodied experience in a shared physical world, while LLMs have access only to text—a “low-bandwidth, highly compressed projection” of reality, as AI researcher Yann LeCun has argued. Text alone, the reasoning goes, cannot support genuine understanding.

The appeal extends beyond theoretical elegance. In an era of AI hype and anthropomorphic projection onto these systems, the stochastic parrot offers a needed corrective. It reminds us not to confuse fluent output with comprehension, or pattern matching with reasoning. This caution matters for how we deploy these tools in education and beyond.

But the metaphor only works if we possess a coherent account of what human understanding actually is—and whether it differs fundamentally from sophisticated pattern matching.

The Prediction Machine in Your Skull

The most potent challenge to the stochastic parrot framework comes from predictive processing theory, which has become one of the most influential accounts of brain function in contemporary neuroscience, though it is not universally accepted. Associated with researchers like Karl Friston, Andy Clark, and Anil Seth, this framework reconceives the brain not as a passive receiver of sensory information but as an active prediction engine that generates its model of reality from the inside out.

The mechanism works hierarchically. Your brain continuously generates predictions about incoming sensory data at every level: the shape of the coffee cup on your desk, the weight of your phone in your hand, the sound of approaching footsteps. When sensory input matches these predictions, the signal is “explained away” and doesn’t propagate up the processing hierarchy. Only prediction errors—moments when reality violates expectation—get passed upward to update the internal model.
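The loop described above can be sketched in a few lines. This is a toy, single-level illustration of my own, not a model taken from Friston, Clark, or Seth: the function names, the learning rate, and the tolerance are all assumptions chosen for readability. A belief is compared with each observation; small errors are treated as explained away and nothing is passed upward, while larger errors propagate and nudge the belief.

```python
def predictive_update(belief, observation, learning_rate=0.2, tolerance=0.05):
    """One step of a toy prediction-error loop (illustrative only).

    Returns the updated belief and the error passed upward, which is 0.0
    when the prediction already accounts for the observation.
    """
    error = observation - belief  # prediction error
    if abs(error) <= tolerance:
        return belief, 0.0  # "explained away": nothing propagates
    return belief + learning_rate * error, error


def run(observations, belief=0.0):
    """Feed a sequence of observations through the loop."""
    propagated = []
    for observation in observations:
        belief, error = predictive_update(belief, observation)
        propagated.append(error)
    return belief, propagated


# A steady signal, then a surprise: the value jumps from 1.0 to 3.0.
signal = [1.0] * 30 + [3.0] * 30
final_belief, errors = run(signal)
```

Once the belief has settled near 1.0, the propagated error drops to zero. The jump to 3.0 produces a burst of error that fades as the belief catches up, which is the sense in which only violated expectations travel up the hierarchy.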

This isn’t a peripheral detail about neural processing. It’s a fundamental reconceptualization of what perception is. In Anil Seth’s memorable phrase, conscious perception amounts to “controlled hallucination.” We don’t see the world as it is; we see our brain’s predictions about the world, continuously refined by error signals from our senses. The external world serves primarily to constrain and correct our hallucinations, not to write a picture directly onto our neural hardware.

The learning objective bears a striking resemblance to how LLMs are trained: minimize prediction error against observed data. The brain predicts the next moment of sensory experience; the language model predicts the next token in a sequence. Both systems build statistical models of their respective input domains, continuously updating to reduce the gap between prediction and observation.

If the brain operates by predicting sensory states and updating on error, the dismissal of LLMs as mere “prediction machines” loses its critical force. We’re prediction machines too. The distinction lies in what we predict (high-dimensional sensory experience versus discrete text tokens) and how those predictions connect to the world, but perhaps not in the fundamental mechanism of intelligence itself.

Karl Friston’s Free Energy Principle attempts to unify this picture mathematically. The brain, on this account, is an organ for minimizing long-term average surprise, which in practice means building models that successfully predict sensory input. This objective is closely related to the one used to train a language model: minimize the divergence between predicted and observed distributions.
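For readers who want the comparison spelled out, here is a sketch in standard textbook notation. None of these symbols appear elsewhere in this essay, so treat them as my assumptions: θ denotes model parameters, x_t a token, o an observation, s a hidden state, and q an approximate posterior.

```latex
% Next-token training objective of a language model with parameters \theta
\mathcal{L}_{\mathrm{LM}}(\theta)
  = -\,\mathbb{E}_{x \sim p_{\mathrm{data}}}
    \Big[ \sum_{t} \log p_\theta(x_t \mid x_{<t}) \Big]

% Variational free energy for observation o, hidden state s, approximate posterior q
F(q, o)
  = \mathbb{E}_{q(s)}\big[ \log q(s) - \log p(o, s) \big]
  = D_{\mathrm{KL}}\big( q(s) \,\|\, p(s \mid o) \big) - \log p(o)
  \;\ge\; -\log p(o)
```

Both quantities are built on the surprise term, the negative log probability of what was actually observed. The language-model loss is the average surprise of the next token, and free energy is an upper bound on the surprise of the sensory observation. That is the sense in which the objectives are related; it does not make them identical.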

This creates an uncomfortable symmetry. When we say LLMs “hallucinate,” we typically mean they generate plausible-sounding text unsupported by facts. But if human perception is controlled hallucination, constrained by sensory feedback, then both systems generate probable continuations of their training data. Ours happens to include rich sensory grounding; theirs includes the statistical structure of human language. Both are, in a technical sense, hallucinating probable next states—the difference is simply that sensory reality provides stricter constraints than text alone.

When Parrots Build World Models

The stochastic parrot metaphor assumes LLMs learn only surface-level statistical correlations without developing internal representations of the world those correlations describe. Recent research in mechanistic interpretability has challenged this assumption in unexpected ways.

Consider Othello-GPT, a language model trained exclusively to predict legal moves in the board game Othello. The training data consists only of move sequences, such as strings like “C4 D3 E3,” with no information about board states, game rules, or spatial relationships. According to the stochastic parrot framework, such a model should learn only which moves tend to follow others statistically. It should be, in effect, a move-predicting parrot.

But when researchers from MIT and elsewhere probed the model’s internal representations, they found something quite remarkable: the network had developed an emergent linear representation of the board state. By examining the model’s activations, researchers could decode with high accuracy which squares were occupied by which player at any point in the game. The model wasn’t just predicting moves based on surface patterns; it had constructed an internal model of the game world itself.
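To make "decoding from activations" concrete, here is a minimal sketch of a linear probe. It is not the Othello-GPT code: the activations are synthetic stand-ins that I generate so the example runs on its own, and every name in it is hypothetical. In the actual research, the vectors would be read out of a trained network and the labels would be the true state of a board square.

```python
import math
import random


def make_activations(n, dim, rng):
    """Synthetic stand-in for hidden activations (an assumption, not real data).

    Each vector encodes a binary label, such as "square is occupied", along
    one fixed random direction, buried in Gaussian noise.
    """
    direction = [rng.gauss(0, 1) for _ in range(dim)]
    samples = []
    for _ in range(n):
        label = rng.randint(0, 1)
        sign = 1.0 if label == 1 else -1.0
        vector = [sign * d + rng.gauss(0, 1) for d in direction]
        samples.append((vector, label))
    return samples


def probe_logit(weights, bias, vector):
    """The probe is purely linear: a weighted sum plus a bias."""
    return sum(w * x for w, x in zip(weights, vector)) + bias


def train_linear_probe(samples, dim, epochs=20, lr=0.05):
    """Fit a logistic-regression probe with plain stochastic gradient descent."""
    weights, bias = [0.0] * dim, 0.0
    for _ in range(epochs):
        for vector, label in samples:
            # Clamp the logit so math.exp cannot overflow.
            z = max(-30.0, min(30.0, probe_logit(weights, bias, vector)))
            predicted = 1.0 / (1.0 + math.exp(-z))
            gradient = predicted - label
            weights = [w - lr * gradient * x for w, x in zip(weights, vector)]
            bias -= lr * gradient
    return weights, bias


def accuracy(weights, bias, samples):
    """Fraction of samples whose label the probe recovers."""
    hits = sum((probe_logit(weights, bias, v) > 0) == (y == 1) for v, y in samples)
    return hits / len(samples)


rng = random.Random(0)
samples = make_activations(600, 16, rng)
train, held_out = samples[:400], samples[400:]
weights, bias = train_linear_probe(train, 16)
probe_accuracy = accuracy(weights, bias, held_out)
```

The logic of the experiment is the point here. If a probe this simple recovers the label from held-out activations, the information must be laid out in the vectors in an easily readable form. The probe itself is too weak to have computed that information on its own.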

Similar findings have emerged from models trained on chess games and even abstract spatial and temporal relationships. Models trained solely on text describing spatial locations develop internal representations of those locations in a coordinate space. Models trained on time-stamped text develop representations of temporal progression. The systems appear to be building structural models of the domains described in their training data, not merely memorizing statistical co-occurrences.

This evidence directly challenges the Octopus Test’s central assumption. The thought experiment presumes that statistical exposure alone cannot yield understanding of underlying structure. But if an octopus were trained on enough Othello games—observing only move sequences, just as Othello-GPT does—the evidence suggests it would derive an internal representation of the board state and game rules. The octopus wouldn’t need to see the physical board or touch the pieces. Pure statistical exposure to move patterns would be sufficient to construct a world model.

This evidence doesn’t prove these models “understand” in any phenomenological sense. But it suggests that the distinction between “statistical pattern matching” and “world modeling” may be less clear than the stochastic parrot metaphor assumes. If a system trained purely on text can develop spatial representations, temporal models, and causal structures, the claim that text alone cannot support understanding becomes more complicated.

The philosopher Daniel Dennett spent decades arguing for a functionalist approach to cognition: if something performs all the functions of understanding, including answering questions, solving problems, making predictions, and updating on evidence, at what point does the distinction between “real” and “simulated” understanding collapse into mere terminology? The stochastic parrot metaphor wants to preserve this distinction absolutely. Empirical evidence suggests it may be a matter of degree.

The Human Parrots Among Us

Here’s where the mirror gets uncomfortable. If we examine human linguistic behavior with the same scrutiny we apply to LLMs, the boundary between “genuine understanding” and “sophisticated pattern matching” becomes harder to locate.

Cognitive scientists have documented what they call the “illusion of explanatory depth”—the phenomenon where humans confidently believe they understand complex mechanisms until asked to explain them in detail. Most people claim to understand how a toilet flushes or how a bicycle stays upright, but when pressed to articulate the causal mechanisms, they produce shallow, often incorrect explanations. We parrot the appearance of understanding without possessing the underlying model.

Consider how we use language in practice. When someone mentions Einstein’s famous equation E=mc², most of us can repeat it and roughly gloss its significance. But how many have worked through the derivation from special relativity? How many could explain the experimental evidence supporting it? We deploy scientific facts as social signals, markers of education and credibility, often without the deep understanding the stochastic parrot framework attributes to human cognition. And here’s the uncomfortable truth: LLMs are trained on precisely this data—human language that frequently serves social functions rather than rigorous truth-seeking. If we’re parroting to signal membership in educated communities, language models faithfully model that very human behavior.

This parroting extends to everyday conversation. We repeat phrases, idioms, and explanations we’ve heard without independently verifying their truth. We invoke expert consensus on topics we haven’t studied. And we use words we learned from context without looking up their precise definitions. The philosopher Ludwig Wittgenstein built an entire philosophy of language on the observation that meaning emerges from use in social contexts, not from internal mental states mapping words to world. We learn language games by playing them, not by grounding every term in direct physical experience.

The Octopus Test assumes humans possess robust causal models of physical reality that ground our language use. But research on common-sense reasoning reveals how shallow these models often are. We can navigate daily life with remarkably crude approximations of physics, biology, and mechanics. When forced to reason explicitly about unfamiliar situations—precisely the kind of challenge the octopus faces with the bear and coconut—we often resort to surface-level associations rather than deep causal reasoning.

The parallel extends to how we acquire language in the first place. Children learn language not through a systematic study of grammar and semantics but through exposure to statistical patterns in their linguistic environment. They absorb vocabulary, syntax, and pragmatic conventions by observing how words cluster together, which phrases follow questions, and how intonation signals meaning. We internalize cultural knowledge, moral intuitions, and conceptual frameworks through immersion in communities of practice. The process bears more resemblance to training a neural network on examples than to the deliberate, symbolic reasoning the stochastic parrot metaphor implicitly contrasts with machine learning.

This isn’t an argument that humans don’t understand language or lack genuine cognition. It’s an observation that the idealized model of human understanding the stochastic parrot metaphor relies upon may not describe how human minds actually work. We’re not as far from sophisticated pattern matching as we’d like to believe.

Beyond the Mirror

The stochastic parrot metaphor persists because it offers certainty in an uncertain landscape. It draws a clear line between human and machine, understanding and simulation, meaning and mere form. In an era where AI capabilities advance faster than our frameworks for making sense of them, such clarity provides comfort.

But the comfort may be false. Contemporary neuroscience suggests that human cognition emerges from mechanisms that look suspiciously like sophisticated statistical inference. We are prediction engines, building probabilistic models of our environment and updating them on error. Our understanding may rest on the same foundations we dismiss as mere “pattern matching” when we observe them in artificial systems.

This realization doesn’t diminish human cognition. It should elevate our appreciation of what statistical learning can achieve when operating at sufficient scale and complexity. The human brain processes vast amounts of multimodal sensory data embedded in physical and social contexts that provide rich training signals. Language models process text at scales and speeds beyond human capability, discovering patterns and structures invisible to conscious analysis. Both are remarkable accomplishments of prediction-driven learning.

None of this implies that human and artificial intelligence are identical. Our sensory grounding, embodied interaction with the physical world, social embedding, and evolutionary history create profound differences in how our prediction machines operate. But these are differences in architecture, training data, and implementation—not fundamental differences in kind.

The more productive question isn’t whether LLMs are “real” intelligence versus “stochastic parrots,” but what different types of cognitive architectures enable and constrain. What can systems grounded in text alone achieve, and what requires embodied, social, affective experience? How do different training objectives and data types shape the resulting capabilities? Where do human and artificial intelligence complement each other, and where do they diverge?

These questions demand careful empirical investigation rather than confident metaphorical dismissal. The stochastic parrot narrative provides clarity, but clarity purchased at the cost of accuracy serves poorly as a foundation for understanding intelligence—human or artificial.

I will keep encountering those LinkedIn posts, still arriving with the same certainty. But when I look into the mirror they hold up to AI systems, I increasingly see a reflection of our own cognitive processes. Perhaps we are all stochastic parrots, predicting probable continuations based on statistical patterns in our respective training data. The difference lies not in whether we engage in sophisticated pattern matching, but in acknowledging that this process might be precisely what intelligence is.

The question isn’t whether to accept or reject the stochastic parrot metaphor. It’s whether we’re prepared for what happens when the mirror turns back on ourselves.

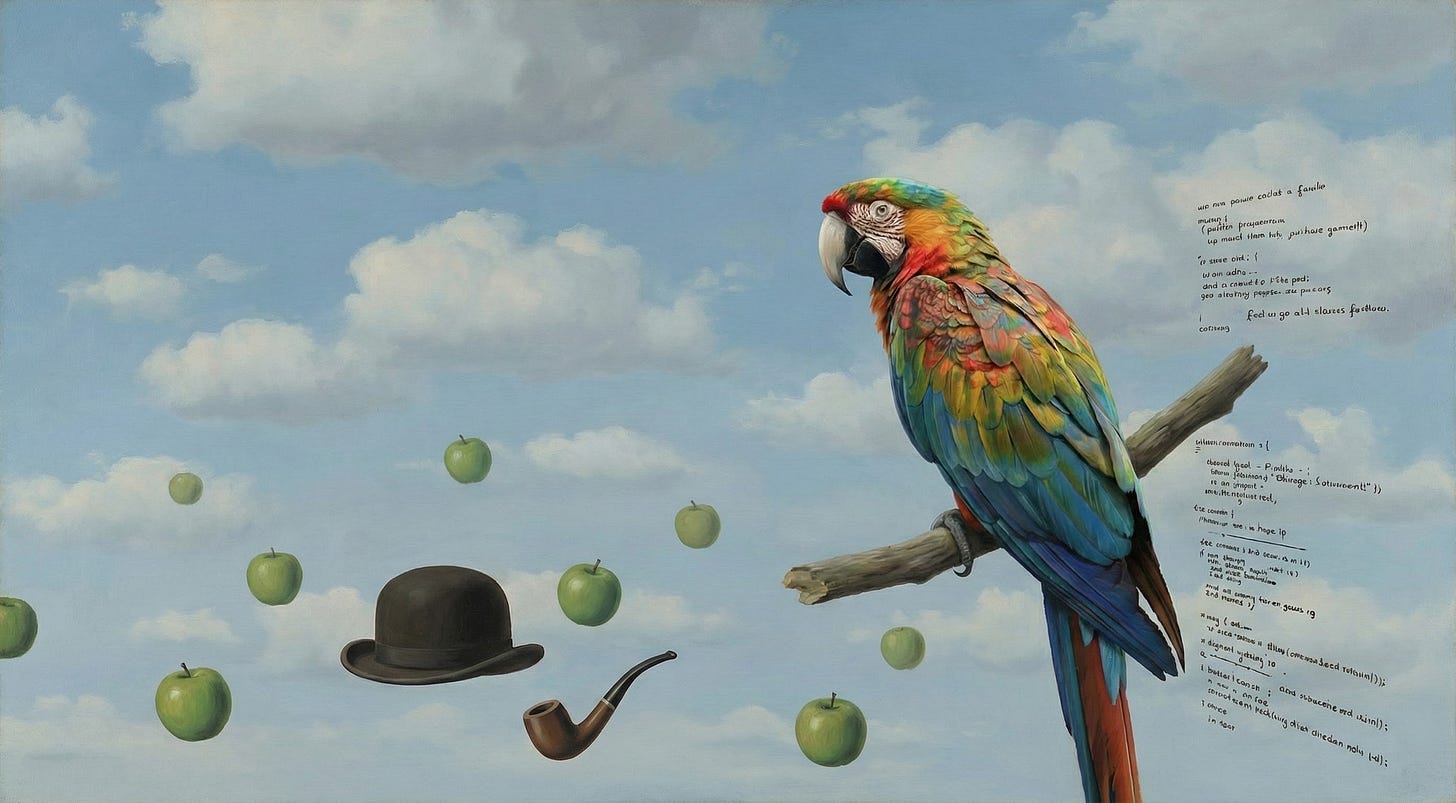

The images in this article were generated with Nano Banana Pro.

P.S. I believe transparency builds the trust that AI detection systems fail to enforce. That’s why I’ve published an ethics and AI disclosure statement, which outlines how I integrate AI tools into my intellectual work.

The practical implication of this reframing matters more than the philosophical one. If both systems are fundamentally prediction engines, the engineering challenge shifts from "how do we make AI understand" to "how do we provide better error signals."

Human cognition has sensory reality as a constraint layer. LLMs in production need an equivalent - what I call an evidence layer. Every output needs to come with sources, confidence, and conditions under which the answer would change.
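As a rough sketch of what such an evidence layer might carry, consider the data structure below. The class, its field names, the 0.7 threshold, and the example document are all hypothetical illustrations, not an existing library or a description of any deployed system.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class EvidencedAnswer:
    """Hypothetical container pairing a model output with its evidence."""
    text: str
    confidence: float  # 0.0 to 1.0; how it is estimated is left open
    sources: List[str] = field(default_factory=list)
    would_change_if: List[str] = field(default_factory=list)

    def __post_init__(self):
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")

    def is_supported(self, min_confidence=0.7):
        """True only when a source is cited and confidence clears the bar."""
        return bool(self.sources) and self.confidence >= min_confidence

    def render(self):
        """Format the answer so the uncertainty is visible to the reader."""
        lines = [self.text, f"Confidence: {self.confidence:.0%}"]
        lines.append("Sources: " + ("; ".join(self.sources) or "none cited"))
        if self.would_change_if:
            lines.append("Would change if: " + "; ".join(self.would_change_if))
        return "\n".join(lines)


grounded = EvidencedAnswer(
    text="The contract renews on 1 March.",
    confidence=0.9,
    sources=["contract.pdf, section 4 (hypothetical document)"],
    would_change_if=["an amendment postdating the contract exists"],
)
ungrounded = EvidencedAnswer(text="The contract renews on 1 March.", confidence=0.9)
```

The design choice worth noting is that a confident answer with no cited source still fails `is_supported`, so confidence alone never counts as evidence.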

The illusion of explanatory depth you mention is exactly what we see in AI-human interactions. Users trust confident outputs the same way they trust confident humans - often incorrectly. The fix isn't making AI more confident or less confident. It's making uncertainty visible and actionable.

The Othello-GPT finding is particularly relevant. If world models emerge from statistical patterns, then domain-specific constraints might be sufficient for reliable behavior - you don't need phenomenological understanding to get useful outputs.